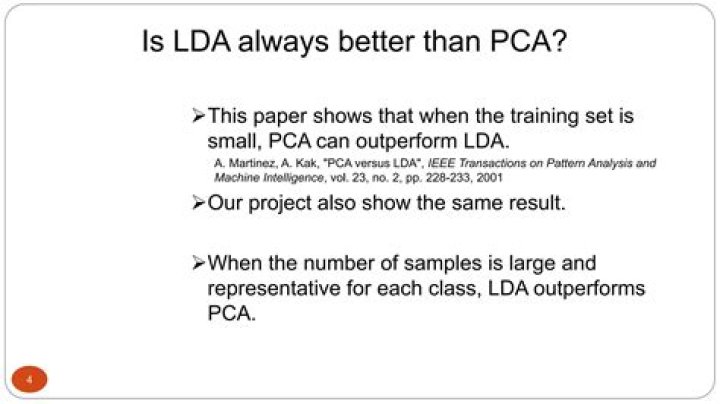

Is PCA better than LDA?

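Neither is better in general, because they serve different purposes. PCA is a dimensionality reduction technique that helps mitigate the ‘curse of dimensionality’ when modelling. LDA is primarily a classification method; it often outperforms logistic regression on small datasets with well-separated classes. It also handles multi-class data naturally and, through its class priors, can accommodate class imbalance.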

What is the difference between LDA and PCA?

Both LDA and PCA are linear transformation techniques. LDA is supervised, whereas PCA is unsupervised: PCA ignores class labels. We can picture PCA as a technique that finds the directions of maximal variance, and LDA as one that finds the directions that best separate the classes. Remember that LDA assumes normally distributed classes with equal class covariances.
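The supervised/unsupervised distinction shows up directly in how the two transformers are fitted. The following is a minimal sketch using scikit-learn; the Iris dataset and the choice of two components are assumptions made for illustration, not something the text above prescribes.

```python
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

# Illustrative dataset (an assumption): 150 samples, 4 features, 3 classes.
X, y = load_iris(return_X_y=True)

# PCA is unsupervised: it is fitted on the features only.
X_pca = PCA(n_components=2).fit_transform(X)

# LDA is supervised: fitting also requires the class labels y.
# With 3 classes, LDA can produce at most 3 - 1 = 2 components.
X_lda = LinearDiscriminantAnalysis(n_components=2).fit_transform(X, y)

print(X_pca.shape, X_lda.shape)
```

Both calls return a two-column projection of the same data; the only difference in the call is whether `y` is passed, which is exactly the difference in learning approach.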

What is the most significant difference between PCA and LDA?

Both algorithms rely on eigendecomposition (PCA of the data's covariance matrix, LDA of the class scatter matrices), but the biggest difference between the two is in the basic learning approach: PCA is unsupervised, whereas LDA is supervised. PCA reduces dimensions by exploiting the correlation between features, without reference to class labels.

Is linear discriminant analysis machine learning?

Yes. Linear Discriminant Analysis (LDA) is a dimensionality reduction technique used as a pre-processing step in machine learning and pattern-classification applications. It is also a supervised classification technique in its own right.

Does PCA maximize variance?

PCA is a linear dimension-reduction technique that finds new axes that maximize the variance in the data. The first of these principal axes captures the most variance, followed by the second, the third, and so on; each axis is orthogonal to those computed before it.
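These properties can be checked numerically. The sketch below implements PCA as an eigendecomposition of the sample covariance matrix with NumPy; the synthetic data, the seed, and the variable names are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
# Synthetic data (illustrative only): 200 samples, 3 correlated features.
mixing = rng.normal(size=(3, 3))
X = rng.normal(size=(200, 3)) @ mixing

# Center the data and eigendecompose its sample covariance matrix.
Xc = X - X.mean(axis=0)
cov = np.cov(Xc, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(cov)  # eigh returns ascending eigenvalues

# Reorder so the axis with the largest variance comes first.
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Project onto the principal axes and measure the variance along each one.
scores = Xc @ eigvecs
projected_var = scores.var(axis=0, ddof=1)

print("variance along each principal axis:", projected_var)
print("axes orthonormal:", np.allclose(eigvecs.T @ eigvecs, np.eye(3)))
```

The variance of the data along each principal axis equals the corresponding eigenvalue, the variances come out in decreasing order, and the axes are mutually orthogonal.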

Is PCA supervised or unsupervised?

Note that PCA is an unsupervised method, meaning that it does not make use of any labels in the computation.

Where is PCA most useful?

PCA is particularly useful for data in which multicollinearity exists between the features/variables. It can be used when the input has many dimensions (i.e. a lot of variables), and it can also be used for denoising and data compression.
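To make the multicollinearity case concrete, here is a small NumPy sketch in which one feature is almost an exact multiple of another. The synthetic features `x1`, `x2`, `x3` and the noise level are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 500
x1 = rng.normal(size=n)
x2 = 2.0 * x1 + 0.05 * rng.normal(size=n)  # almost an exact multiple of x1
x3 = rng.normal(size=n)                     # independent of x1 and x2
X = np.column_stack([x1, x2, x3])

# Standardize, then eigendecompose the covariance of the standardized data.
Xs = (X - X.mean(axis=0)) / X.std(axis=0)
eigvals, eigvecs = np.linalg.eigh(np.cov(Xs, rowvar=False))
eigvals, eigvecs = eigvals[::-1], eigvecs[:, ::-1]  # largest variance first

# Share of total variance carried by each principal component.
explained_ratio = eigvals / eigvals.sum()

# Keep two components; the resulting scores are uncorrelated with each other.
scores = Xs @ eigvecs[:, :2]
score_corr = np.corrcoef(scores, rowvar=False)[0, 1]

print("explained variance ratio:", explained_ratio)
print("correlation between the two kept components:", score_corr)
```

Because `x1` and `x2` carry nearly the same information, two components retain almost all of the variance of the three original features, and the components themselves are uncorrelated, which removes the collinearity.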

Is PCA orthogonal?

Yes. The PCA components are orthogonal to each other. By contrast, the components of non-negative matrix factorization (NMF) are all non-negative and therefore form a non-orthogonal basis.

Is PCA linear or nonlinear?

PCA is linear. It is defined as an orthogonal linear transformation that maps the data to a new coordinate system such that the greatest variance by some scalar projection of the data comes to lie on the first coordinate (called the first principal component), the second greatest variance on the second coordinate, and so on.

What are the disadvantages of PCA?

Disadvantages of Principal Component Analysis

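- Independent variables become less interpretable: after applying PCA to the dataset, your original features are replaced by principal components, which are linear combinations of those features and are harder to interpret.

- Data standardization is a must before PCA: PCA is sensitive to feature scale, so features measured in larger units dominate the principal components unless the data are standardized first.

- Information loss: keeping only some of the principal components discards the variance carried by the rest.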
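The standardization point in the list above can be illustrated with a short NumPy sketch. The two synthetic features `a` and `b`, the factor of 1000, and the helper `first_pc` are hypothetical choices made for this example.

```python
import numpy as np

def first_pc(X):
    """Return the unit-length direction of largest variance of X."""
    Xc = X - X.mean(axis=0)
    eigvals, eigvecs = np.linalg.eigh(np.cov(Xc, rowvar=False))
    return eigvecs[:, -1]  # eigh sorts eigenvalues in ascending order

rng = np.random.default_rng(1)
a = rng.normal(size=300)
# b is correlated with a but recorded on a scale about 1000 times larger.
b = 1000.0 * (a + 0.5 * rng.normal(size=300))
X = np.column_stack([a, b])

# Without standardization the large-scale feature dominates the first axis.
pc_raw = first_pc(X)

# After standardization both features contribute equally.
X_std = (X - X.mean(axis=0)) / X.std(axis=0)
pc_std = first_pc(X_std)

print("first axis, raw data:         ", np.abs(pc_raw))
print("first axis, standardized data:", np.abs(pc_std))
```

On the raw data the first principal axis points almost entirely along `b`, simply because of its units. After standardization the two features receive equal weight, as expected for two positively correlated variables on the same scale.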

What is the critical principle of linear discriminant analysis?

The critical principle of linear discriminant analysis (LDA) is to maximize the separability between the classes so that they can be told apart as well as possible. Like PCA, LDA reduces dimensionality.
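For two classes, this principle is commonly expressed as Fisher's criterion: choose the direction that maximizes the squared distance between the projected class means relative to the within-class scatter. The following NumPy sketch applies it to synthetic data built so that the direction of largest variance is not the direction that separates the classes; the data, the seed, and the helper `fisher_ratio` are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(7)
# Two synthetic classes: wide spread along x, separated only along y.
cov = np.array([[9.0, 0.0], [0.0, 0.25]])
X0 = rng.multivariate_normal([0.0, 0.0], cov, size=200)
X1 = rng.multivariate_normal([0.0, 2.0], cov, size=200)

m0, m1 = X0.mean(axis=0), X1.mean(axis=0)

# Within-class scatter matrix: scatter around each class's own mean.
Sw = (X0 - m0).T @ (X0 - m0) + (X1 - m1).T @ (X1 - m1)

# Fisher's discriminant direction for two classes: Sw^{-1} (m1 - m0).
w_lda = np.linalg.solve(Sw, m1 - m0)
w_lda /= np.linalg.norm(w_lda)

# First PCA direction of the pooled data, for comparison (labels ignored).
X = np.vstack([X0, X1])
Xc = X - X.mean(axis=0)
_, vecs = np.linalg.eigh(np.cov(Xc, rowvar=False))
w_pca = vecs[:, -1]

def fisher_ratio(w):
    """Between-class separation over within-class scatter along w."""
    between = (w @ (m1 - m0)) ** 2
    within = w @ Sw @ w
    return between / within

print("LDA direction:", w_lda, "Fisher ratio:", fisher_ratio(w_lda))
print("PCA direction:", w_pca, "Fisher ratio:", fisher_ratio(w_pca))
```

Here PCA picks the x axis, where the variance is largest but the classes overlap, while the LDA direction lies close to the y axis, where the classes actually differ.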

What is LDA (linear discriminant analysis)?

Linear Discriminant Analysis (LDA) is most commonly used as a dimensionality reduction technique in the pre-processing step for pattern-classification and machine learning applications.

What is the difference between PCA and LDA?

Like PCA, LDA reduces dimensionality. Unlike PCA, it constructs new linear axes and projects the data points onto them so as to maximize the separability between the known classes.

Is LDA a better dimensionality reduction technique than PCA for labelled data?

Therefore, we can conclude that LDA is a better dimensionality reduction technique than PCA for labelled data. Note: the dataset reduced using LDA gave the same accuracy as the original dataset, i.e. 97%.
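A comparison of this kind can be set up as follows. This is only a sketch of the procedure and does not reproduce the 97% figure quoted above: the dataset (scikit-learn's wine data), the classifier (k-nearest neighbours), the train/test split, and the choice of two components are all assumptions, since the original experiment is not described here.

```python
from sklearn.datasets import load_wine
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Assumed dataset: 178 samples, 13 features, 3 classes.
X, y = load_wine(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

# Same classifier on the original features, a 2-D PCA projection,
# and a 2-D LDA projection. Each pipeline is fitted on training data only.
pipelines = {
    "original": make_pipeline(StandardScaler(), KNeighborsClassifier()),
    "pca_2d": make_pipeline(
        StandardScaler(), PCA(n_components=2), KNeighborsClassifier()
    ),
    "lda_2d": make_pipeline(
        StandardScaler(),
        LinearDiscriminantAnalysis(n_components=2),
        KNeighborsClassifier(),
    ),
}

accuracy = {}
for name, pipe in pipelines.items():
    pipe.fit(X_train, y_train)
    accuracy[name] = pipe.score(X_test, y_test)
    print(f"{name}: test accuracy = {accuracy[name]:.3f}")
```

The resulting accuracies depend on the dataset, the split, and the classifier, so a single run like this supports a conclusion only for that particular setup.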