Is Thompson sampling better than UCB?

In particular, when the reward probability of the best arm is 0.1 and the 9 others have a probability of 0.08, Thompson sampling—with the same prior as before—is still better than UCB and is still asymptotically optimal.

Is Thompson sampling optimal?

A recent article by Russo [2] shows that Thompson sampling is far from optimal as an allocation method for deciding which arm is the best one to play in the exploitation period.

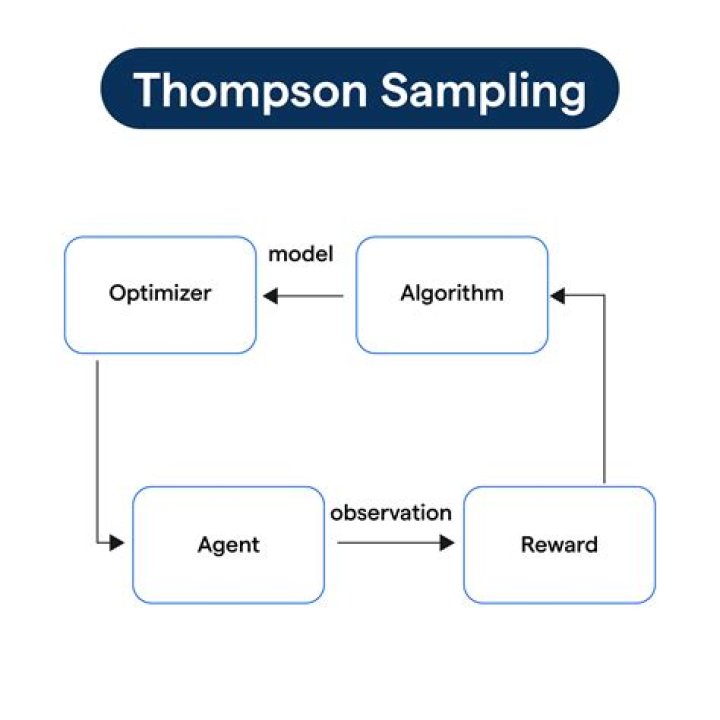

How does Thompson sampling work?

Thompson sampling is an algorithm for online decision problems where actions are taken sequentially in a manner that must balance between exploiting what is known to maximize immediate performance and investing to accumulate new information that may improve future performance.

What is the difference between UCB and Thompson sampling?

UCB-1 will produce allocations more similar to an A/B test, while Thompson sampling is more optimized for maximizing long-term overall payoff. UCB-1 also behaves more consistently in each individual experiment than Thompson sampling, which experiences more noise due to the random sampling step in the algorithm.

What is upper confidence bound algorithm?

The Upper Confidence Bound follows the principle of optimism in the face of uncertainty which implies that if we are uncertain about an action, we should optimistically assume that it is the correct action.
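As a rough illustration of this optimism principle, the sketch below scores each arm by its empirical mean plus an exploration bonus that shrinks as the arm is pulled more often. It assumes the standard UCB1 bonus `sqrt(2 * ln(t) / n)` and rewards in [0, 1]; the function name `ucb1_select` and its arguments are hypothetical names chosen for this example.

```python
import math

def ucb1_select(counts, reward_sums, t):
    """Return the index of the arm to pull at round t (t starts at 1).

    counts[i]      -- number of times arm i has been pulled so far
    reward_sums[i] -- total reward collected from arm i so far
    """
    # Pull every arm once first so that each empirical mean is defined.
    for arm, n in enumerate(counts):
        if n == 0:
            return arm

    best_arm, best_index = 0, float("-inf")
    for arm, (n, total) in enumerate(zip(counts, reward_sums)):
        mean = total / n
        # Optimism: the less an arm has been tried, the larger its bonus.
        bonus = math.sqrt(2.0 * math.log(t) / n)
        if mean + bonus > best_index:
            best_arm, best_index = arm, mean + bonus
    return best_arm
```

Note that an arm with a lower empirical mean can still be selected when it has been pulled far less often than the others, because its bonus term dominates.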

What is Bayesian bandit?

In a Bayesian paradigm, you use information you already know (priors) to make predictions about something you want to know. The term ‘bandits’ comes from a class of problems in probability that deal with variables that have ‘many arms,’ much like a row of slot machines on a casino floor.

Is Thompson sampling Bayesian?

Thompson sampling is a Bayesian approach to the Multi-Armed Bandit problem that dynamically balances incorporating more information to produce more certain predicted probabilities of each lever with the need to maximize current wins.

Where is Thompson sampling used?

Thompson Sampling has been widely used in many online learning problems including A/B testing in website design and online advertising, and accelerated learning in decentralized decision making.

What is UCB1 algorithm?

This ensures each action is tried infinitely often but still balances exploration and exploitation. (Source: Noel Welsh, "Bandit Algorithms Continued: UCB1", 09 November 2010.)

What is UCB algorithm?

UCB is a deterministic algorithm for reinforcement learning that balances exploration and exploitation using a confidence bound that the algorithm assigns to each machine on each round. (A round is one pull of a machine's arm by the player.)

What is UCB RL?

The Upper Confidence Bound (UCB) Bandit Algorithm.

What is linear bandit?

We now introduce the notion of regret in the linear bandit setting. In general, the notion of regret is used to compare the performance of the optimal strategy with that of a bandit strategy. In stochastic problems we often consider two notions of averaged regret: the expected regret and the pseudo-regret.
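For concreteness, the two notions can be written as follows. The notation is an assumption made for this sketch, not taken from the source: $\mathcal{A} \subset \mathbb{R}^d$ is the action set, $X_t(a)$ is the reward of action $a$ at round $t$, $a_t$ is the action the bandit strategy plays, $\theta^*$ is the unknown parameter, and $n$ is the horizon.

```latex
% Expected regret: compare against the best action in hindsight, then average.
\mathbb{E}[R_n] = \mathbb{E}\Big[\max_{a \in \mathcal{A}} \sum_{t=1}^{n} X_t(a) - \sum_{t=1}^{n} X_t(a_t)\Big]

% Pseudo-regret: compare against the action that is best in expectation.
\bar{R}_n = \max_{a \in \mathcal{A}} \mathbb{E}\Big[\sum_{t=1}^{n} X_t(a) - \sum_{t=1}^{n} X_t(a_t)\Big]

% Assuming linear mean rewards, E[X_t(a)] = <theta^*, a>, the pseudo-regret becomes
\bar{R}_n = n \max_{a \in \mathcal{A}} \langle \theta^*, a \rangle
            - \mathbb{E}\Big[\sum_{t=1}^{n} \langle \theta^*, a_t \rangle\Big]
```

Since the maximum of expectations is at most the expectation of the maximum, $\bar{R}_n \le \mathbb{E}[R_n]$, so the pseudo-regret is the weaker of the two notions.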

What is Thompson sampling and how does it work?

Thompson Sampling is an algorithm that balances exploration and exploitation to maximize the cumulative reward obtained by performing actions. It makes use of probability distributions and Bayes' theorem to generate a success rate distribution for each action.
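A minimal sketch of this idea for arms with binary (success/failure) rewards is shown below. It assumes a uniform Beta(1, 1) prior on each arm's success rate; the function names `thompson_select` and `run_thompson` and the simulated `true_probs` are hypothetical and only serve the illustration.

```python
import random

def thompson_select(successes, failures, rng):
    """Sample one plausible success rate per arm and play the largest."""
    # Posterior for each arm under a uniform prior: Beta(1 + wins, 1 + losses).
    samples = [rng.betavariate(1 + s, 1 + f)
               for s, f in zip(successes, failures)]
    return max(range(len(samples)), key=samples.__getitem__)

def run_thompson(true_probs, n_rounds, seed=0):
    """Simulate Thompson sampling on Bernoulli arms; return per-arm counts."""
    rng = random.Random(seed)
    k = len(true_probs)
    successes, failures = [0] * k, [0] * k
    for _ in range(n_rounds):
        arm = thompson_select(successes, failures, rng)
        # Simulated environment: reward 1 with the arm's true probability.
        if rng.random() < true_probs[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return successes, failures
```

Calling `run_thompson([0.1, 0.9], 1000)` returns the success and failure counts per arm; in a simulation with a gap this large, most pulls typically end up on the better arm.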

Is Thompson sampling algorithm based on beta distribution?

In this analysis of the Thompson Sampling algorithm, we started off with Bayes' rule and used a parametric assumption of Beta distributions for the priors. The overall posterior for each arm's reward function was a combination of a Binomial likelihood and a Beta prior, which could be represented as another Beta distribution.
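The conjugacy step can be spelled out as follows. The symbols are assumptions introduced for this derivation: $\theta$ is an arm's success probability with prior $\mathrm{Beta}(\alpha, \beta)$, and $s$ is the number of successes observed in $n$ pulls of that arm.

```latex
p(\theta \mid s)
  \;\propto\; \underbrace{\theta^{s} (1-\theta)^{n-s}}_{\text{Binomial likelihood}}
  \cdot \underbrace{\theta^{\alpha-1} (1-\theta)^{\beta-1}}_{\text{Beta prior}}
  \;=\; \theta^{\alpha+s-1} (1-\theta)^{\beta+n-s-1}

\theta \mid s \;\sim\; \mathrm{Beta}(\alpha + s,\; \beta + n - s)
```

In other words, updating the posterior only requires adding the observed successes to $\alpha$ and the observed failures to $\beta$.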

What is Thompson sampling in machine learning?

Thompson Sampling is an approach that successfully tackles the problem. It makes use of probability distributions and Bayes' rule to predict the success rate of each slot machine. Let us consider 5 bandits, or slot machines.

How does Thompson sampling balance between exploration and exploitation?

This allows Thompson Sampling to balance exploration and exploitation based on the individual posterior Beta distributions of each arm. Arms that have been explored less often than others tend to have a wider posterior variance, which gives them a chance of being picked through stochastic sampling.
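The effect of the number of pulls on the posterior spread can be checked with the closed-form variance of a Beta distribution, as in the short sketch below. The helper name `beta_variance` is hypothetical, and the example assumes a uniform Beta(1, 1) prior.

```python
def beta_variance(alpha, beta):
    """Variance of a Beta(alpha, beta) distribution."""
    total = alpha + beta
    return (alpha * beta) / (total ** 2 * (total + 1))

# Arm pulled twice (1 success, 1 failure): posterior Beta(2, 2).
rarely_pulled = beta_variance(2, 2)

# Arm pulled 100 times (50 successes, 50 failures): posterior Beta(51, 51).
often_pulled = beta_variance(51, 51)
```

Both posteriors have the same mean of 0.5, but the rarely pulled arm has the larger variance, so its samples spread further and will occasionally exceed those of better-explored arms.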